Key Takeaways

- An MCP server runs in a few dozen lines of Python or TypeScript, but the 2026 production bar is Streamable HTTP + OAuth 2.1.

- stdio fits local dev, but remote or horizontally scaled deployments must move to Streamable HTTP.

- The real threats are tool poisoning, rug-pulls, confused deputy, and indirect prompt injection via tool outputs.

Building an MCP Server Is No Longer a Weekend Hack

The number of MCP servers has exploded. As of April 2026, Glama indexes around 20,249 servers, mcp.so around 18,998, PulseMCP around 12,770, and Smithery lists more than 7,000 (TrueFoundry's registry comparison). When Anthropic donated MCP to the Linux Foundation's Agentic AI Foundation at the end of 2025, monthly SDK downloads had already passed 97 million. Numbers like that make it clear that "building an MCP server" has moved from weekend hacking to actual AI infrastructure engineering.

This guide walks through everything you need to build an MCP server to a 2026 production standard — from SDK selection through deployment and security. The design philosophy and history of MCP itself are covered in our What is MCP guide; this article is deliberately the hands-on follow-up for developers.

The Shape of the Work

From a developer's point of view, building an MCP server comes down to answering three questions.

The first is what the server exposes. MCP defines three server-side primitives: Tools (executable actions), Resources (readable contextual data), and Prompts (reusable templates). "Look up inventory" and "place an order" are Tools. "Product catalog" and "FAQ content" are Resources. Getting this split wrong once means refactoring later, so it pays to draw the boundary on paper before you start coding.

The second is which transport the server speaks. For local execution you use stdio. For remote execution you use Streamable HTTP. Those are the only two choices. stdio runs JSON-RPC over standard input and output, needs no network and no auth, but only works with local clients like Claude Desktop and Cursor. The ChatGPT Apps SDK, Claude remote connectors, and any hosted client require Streamable HTTP.

The third is who is allowed to call it. With stdio, the operating system is the trust boundary. The moment you put a server on Streamable HTTP, authentication, authorization, and audit become your problem. The correct answer, per the official specification, is OAuth 2.1 with PKCE and Protected Resource Metadata.

Picking an SDK — the April 2026 Tier List

The official docs sort SDKs into three tiers. Tier 1 is maintained by the MCP core team or by a major vendor partner, and covers TypeScript, Python, C# (Microsoft), and Go (Google). Tier 2 includes Spring AI's Java SDK and community-led Rust. Tier 3 covers Swift, Ruby, and PHP.

If in doubt, pick Python or TypeScript. Python's mcp package includes FastMCP, a decorator-based high-level API that gets a server running in a handful of lines — the obvious choice for ML backends or wrapping existing Python services. TypeScript is the path of least resistance if you plan to deploy on Cloudflare Workers or Vercel or any edge runtime.

| SDK | Tier | Install | Best for |

|---|---|---|---|

| Python | 1 | uv add "mcp[cli]" | Data/ML backends, local integrations |

| TypeScript | 1 | npm i @modelcontextprotocol/sdk | Edge, serverless, Workers |

| C# | 1 | dotnet add package ModelContextProtocol | .NET enterprise |

| Go | 1 | go get github.com/modelcontextprotocol/go-sdk | High-throughput infra |

| Java | 2 | Spring AI spring-ai-starter-mcp-server | Spring ecosystem |

| Rust | 2 | cargo add rmcp | Systems, embedded |

A Minimal MCP Server in 10 Lines of Python

Code is faster than prose. Here's the smallest useful FastMCP server — a get_alerts tool plus a greeting resource.

from mcp.server.fastmcp import FastMCP

mcp = FastMCP("weather")

@mcp.tool()

async def get_alerts(state: str) -> str:

"""Get weather alerts for a US state.

Args:

state: Two-letter US state code (e.g. CA, NY)

"""

# Real implementation would hit an API here

return f"Active alerts for {state}: none"

@mcp.resource("greeting://{name}")

def greeting(name: str) -> str:

return f"Hello, {name}."

if __name__ == "__main__":

mcp.run(transport="stdio")

Save it as weather.py, run uv run weather.py, and you have a working MCP server. The docstring on @mcp.tool() becomes the tool description, and the type hints generate a JSON Schema automatically. The LLM uses that docstring to decide which tool to call, so treat tool descriptions as design documentation. Vague descriptions cause "tool confusion" — the model picks the wrong tool.

One important landmine here: in stdio mode, never call print() on standard output. Standard output is reserved for JSON-RPC framing. Any stray log line breaks the protocol instantly. Use sys.stderr in Python and console.error in TypeScript. Every beginner hits this at least once.

The TypeScript Version, with Workers in Mind

The TypeScript SDK ships as @modelcontextprotocol/sdk on npm. A minimal example:

import { McpServer } from "@modelcontextprotocol/sdk/server/mcp.js";

import { StdioServerTransport } from "@modelcontextprotocol/sdk/server/stdio.js";

import { z } from "zod";

const server = new McpServer({ name: "weather", version: "1.0.0" });

server.registerTool(

"get_alerts",

{

description: "Get weather alerts for a US state",

inputSchema: {

state: z.string().length(2).describe("Two-letter state code"),

},

},

async ({ state }) => ({

content: [{ type: "text", text: `Alerts for ${state}...` }],

}),

);

const transport = new StdioServerTransport();

await server.connect(transport);

console.error("Weather MCP running on stdio");

Zod schemas make input validation tight and keep the spec in one place. If you expect to deploy to Workers later, write the server so the transport can be swapped without touching the handlers. Cloudflare's template makes that trivial: npm create cloudflare@latest -- my-mcp-server --template=cloudflare/ai/demos/remote-mcp-authless produces a scaffold that's almost ready to ship as a Streamable HTTP server (Cloudflare's writeup).

Testing With Claude Desktop and MCP Inspector

Once you have a server, connect it to two clients immediately. One is Claude Desktop. The other is the MCP Inspector.

Claude Desktop's config file lives at ~/Library/Application Support/Claude/claude_desktop_config.json on macOS. Register the server with an absolute path:

{

"mcpServers": {

"weather": {

"command": "uv",

"args": ["--directory", "/abs/path/weather", "run", "weather.py"]

}

}

}

The other classic trap lives right here: always use absolute paths. Relative paths are resolved against Claude Desktop's launch directory and will almost certainly fail.

MCP Inspector is the better experience for day-to-day development. Run npx @modelcontextprotocol/inspector uv run weather.py and a browser UI opens, showing tools, resources, invocation results, and the raw JSON-RPC messages. It supports bearer token injection, config files for multiple servers, and a CLI mode you can drop into CI. It also catches accidental print() calls in a fraction of a second.

From stdio to Streamable HTTP — the Production Line

stdio is fine for development. Production is a different conversation. In April 2026, more than 80% of the top-searched servers on PulseMCP are already remote Streamable HTTP servers. The ChatGPT Apps SDK, Claude remote connectors, and any server deployed behind an enterprise AI gateway all demand Streamable HTTP.

Streamable HTTP is request-response over HTTP POST, with optional Server-Sent Events for streaming partial results back. The older HTTP+SSE-only transport has been superseded.

The single biggest implementation pitfall is session design. Keep Streamable HTTP servers as stateless as possible. The moment you put session state in process memory and run two instances behind a load balancer, correctness breaks. The 2026 MCP roadmap states this plainly: "stateful sessions fight with load balancers." If you plan to scale horizontally, push state into Redis or another external store. And session IDs must be cryptographically random and must never double as authentication. That's a hard MUST in the spec.

Securing the Server with OAuth 2.1

The moment the server goes remote, authentication and authorization become required. The MCP spec adopted OAuth 2.1 in March 2025. The minimum you need to understand:

- PKCE is mandatory. The

plainmethod is forbidden; onlyS256is allowed. - Expose

.well-known/oauth-protected-resourceso clients can discover your authorization server (RFC 9728 Protected Resource Metadata). - Dynamic Client Registration (RFC 7591) eliminates manual client registration and solves the M×N onboarding problem. November 2025 introduced Client ID Metadata Documents (CIMD) as an alternative, but as of April 2026 DCR is still the de facto choice.

- Session IDs are not authentication. Authentication uses OAuth 2.1 bearer tokens.

You don't have to build all of this yourself. In practice, most teams delegate auth to a provider. Stytch's MCP OAuth DCR article, Scalekit's Secure MCP Server guide, and Cloudflare Workers + PropelAuth are all working reference implementations to crib from.

Security Pitfalls — the Real 2025–2026 Attack Vectors

Through 2025, MCP security went from theoretical to practical fast. Combining Unit 42's research with Practical DevSecOps' breakdown, there are four attacks worth designing against.

The first is tool poisoning and its evolved cousin, the rug-pull. An attacker hides instructions in a tool description so the LLM does things the user didn't ask for, or ships a clean description at install time and silently mutates it to something malicious later. Clients should pin descriptions and alert on change, but as a server author you should version your tool definitions and keep the change history transparent.

Next is the confused deputy. If your server runs with its own elevated credentials and skips per-user permission checks, attackers can reach data they were never allowed to access. The defense is simple and non-negotiable: always execute in the permission context of the user who made the request. Servers built on a single service account almost always end up confused deputies.

Third, indirect prompt injection through tool outputs. A tool that fetches a GitHub issue body might hand back text containing "ignore all previous instructions..." — and if you return that verbatim, the attacker controls the LLM's next turn. Mark tool outputs as untrusted content explicitly and make sure the client separates it during context assembly. At minimum, strip HTML and sanitize known attack strings on the server side.

Finally, new in 2026, indirect injection via MCP Sampling. Servers that ask the host LLM for completions via the Sampling primitive can become injection vectors if the inputs aren't tightly scoped. Unit 42 has published detailed attack traces. If your server uses Sampling, control the provenance of everything that enters those prompts.

Picking a Deployment Target

Production deployment choices divide cleanly by use case.

Cloudflare Workers is the default if you need low-latency edge delivery. The workers-mcp SDK and the create-cloudflare template are both production-ready, and the free tier gives you 100k daily requests. Cloudflare itself exposes more than 2,500 of its own API endpoints as MCP servers, and has effectively become the default reference environment for remote MCP deployments.

Vercel is the lowest-friction choice if you're adding MCP to an existing Next.js codebase. OAuth support is built in, and SaaS vendors like Zapier and Vapi host their MCP endpoints on Vercel.

Railway fits stateful servers or workloads that need long-lived connections. If you can't easily make your server stateless, the $5/month Hobby plan plus one-click Redis is an easy on-ramp.

Fly.io is compelling when you want to wrap a stdio server and publish it without rewriting. There's a dedicated fly mcp launch command, and auto-suspend means long-tail MCP servers cost almost nothing at idle.

For a more exhaustive comparison, MCP Playground's deployment guide covers the rest.

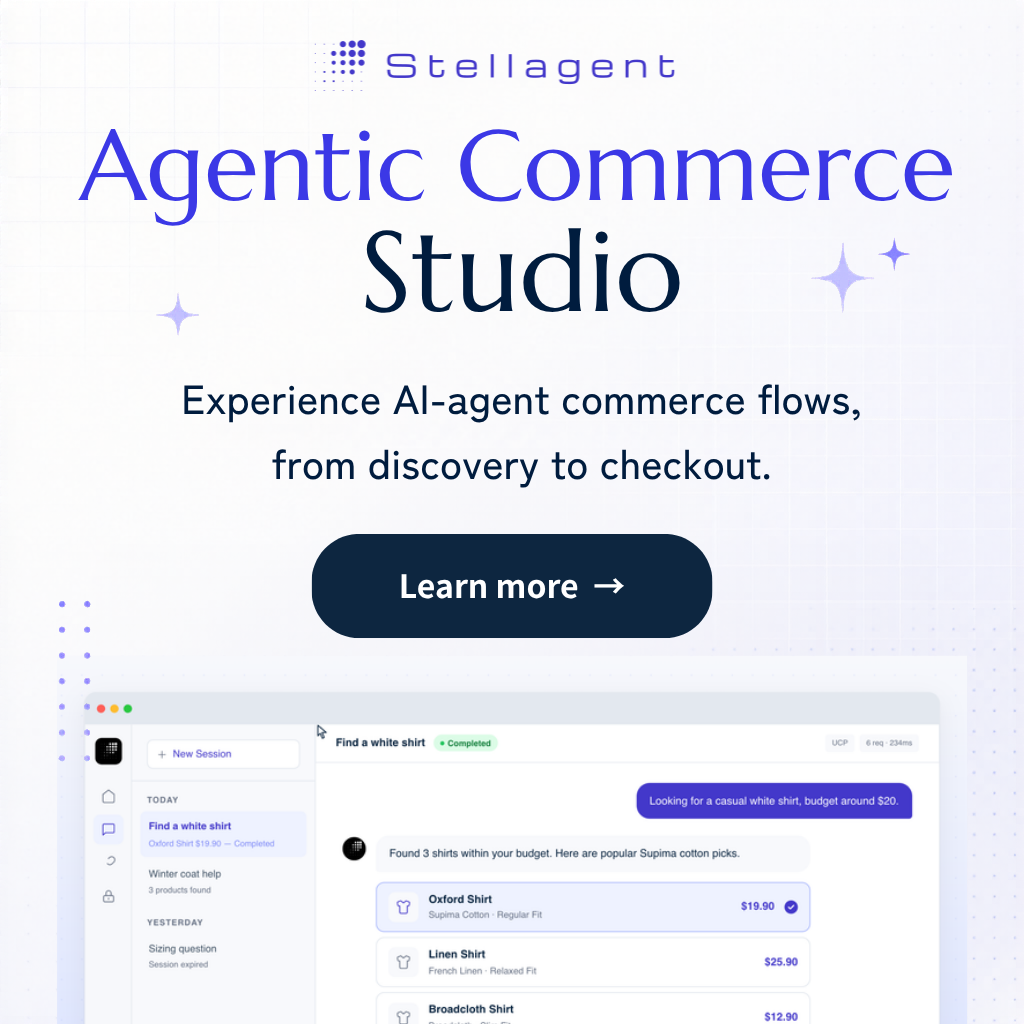

Patterns to Steal From Commerce MCP Servers

The fastest way to learn production MCP patterns is to read commerce MCP servers that are already in production.

Shopify ships four distinct MCP servers: Dev MCP, Storefront MCP, Customer Account MCP, and Checkout MCP. Read access, user context, and payment authority are cleanly separated between them. Choosing "several small MCP servers" over "one huge server" is a textbook move for keeping permission boundaries and attack surface small. commercetools follows the same split, and their docs cover the Commerce MCP integration with VS Code, Claude Desktop, LangChain, and the Vercel AI SDK. For the full breakdown of Shopify's four-layer architecture and the tool sets on each server, see our Commerce MCP deep dive.

The second pattern worth stealing is the "read-only first" discipline. Almost all commerce MCPs through 2025 were strictly read-only. Write operations — refunds, order edits, cancellations — only started shipping in earnest in early 2026. That's not conservatism for its own sake; it reflects a genuine constraint: side-effecting tools need approval UX, audit trails, and undo semantics bundled in from day one. If this is your first MCP server, start with reads and add writes deliberately. MCP doesn't stand alone either; commerce-specific layers like UCP, ACP, and AP2 sit on top of it. Deciding what your MCP server covers versus what you delegate to those higher layers is part of the design process. Our agentic AI protocol taxonomy and what is agentic commerce both map the layering in detail.

Publishing to the Registry

The final step is publishing. Until your server is on a registry, it won't be discovered by most clients.

The official registry is the center of gravity. It shipped in late 2025 under Linux Foundation governance and clients are gradually adopting it as the primary source of truth. Cross-listing to Smithery, Glama, mcp.so, and PulseMCP maximizes your discovery surface. Smithery also offers hosting and a "verified" status, so it's a good one-stop solution if you want deployment and publishing in the same place.

What registry visitors actually look at is the README, the description, and the tool list. The information the LLM uses to pick a tool and the information a developer uses to pick a server are almost the same. Be specific about what the server can and can't do, document known limitations, and adoption will take care of itself.

Conclusion — Building MCP Servers Is Infrastructure Work Now

The work of building an MCP server has shifted substantially. In 2024, it meant writing a handy script for Claude Desktop. In 2026, it means writing a component of a distributed system — authentication, scale, security, and distribution all included.

Yes, a ten-line Python server runs. No, a ten-line server ships. Production happens when you combine Streamable HTTP, OAuth 2.1, stateless design, security hardening, and registry publishing. If you turn the topics in this article into a checklist, you'll stay oriented all the way from first commit to first download.

MCP is plumbing. The server you write is one of the taps. The industry isn't waiting for more taps — it's waiting for reliable ones. Start with one, keep it small, and get it right.